
- Review Article

- Published: 01 October 2003

Working memory: looking back and looking forward

- Alan Baddeley

Nature Reviews Neuroscience volume 4, pages 829–839 (2003)

50k Accesses

3229 Citations

136 Altmetric

Metrics details

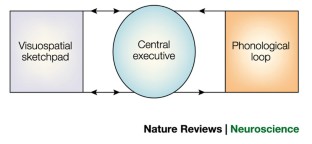

The concept of working memory assumes that a limited capacity system temporarily stores information and thereby supports human thought processes. One prevalent model of working memory comprises three components: a central executive, a verbal storage system called the phonological loop, and a visual storage system called the visuospatial sketchpad.

The phonological loop consists of a store that can hold memory traces for a few seconds, and an articulatory rehearsal process. Retrieval and re-articulation are used to refresh memory traces, and the span of working memory is limited by the amount of material that can be articulated before the first item fades from the store. Word length and similarity between items strongly influence performance on tests of verbal working memory.
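The capacity limit described above can be illustrated with a toy calculation. The sketch below is not taken from the article: it assumes a fixed trace lifetime of 2,000 ms (the text says only "a few seconds"), invented articulation times per word, and a hypothetical function name `estimated_span`. It counts how many items fit into one rehearsal cycle before the first trace would fade, which is enough to reproduce the direction of the word-length effect.

```python
def estimated_span(item_durations_ms, trace_lifetime_ms=2000):
    """Count the items that can be articulated in one rehearsal cycle.

    item_durations_ms: articulation time of each item, in milliseconds
                       (illustrative values, not measured data).
    trace_lifetime_ms: assumed time before an unrehearsed trace fades.
    """
    elapsed = 0
    count = 0
    for duration in item_durations_ms:
        # Stop once rehearsing this item would overrun the trace lifetime,
        # because the first item would have faded before being refreshed.
        if elapsed + duration > trace_lifetime_ms:
            break
        elapsed += duration
        count += 1
    return count


short_words = [300] * 10  # hypothetical one-syllable words, 300 ms each
long_words = [600] * 10   # hypothetical polysyllabic words, 600 ms each

print(estimated_span(short_words))
print(estimated_span(long_words))
```

With these illustrative numbers, the short words give an estimated span of 6 and the long words a span of 3: doubling articulation time halves the number of items that can be kept refreshed.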

Various models have been proposed to account for how serial order is remembered. In chaining models, each item is a cue for the next item, but these models run into problems with sequences in which an item recurs and with similarity effects. Contextual models assume that successive items are associated with a contextual cue or that recall of order is based on positional associations between items.
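The difficulty that chaining models have with recurring items can be made concrete with a small sketch. It is a deliberately simplified illustration, not any of the published models: items are single letters, associations are stored in plain dictionaries, and the function names (`build_chain`, `ambiguous_cues`, `recall_by_position`) are hypothetical. In the chaining store each item cues its successor, so a repeated item ends up cueing two different successors. In the positional store each item is tied to its serial position, so repetition causes no ambiguity.

```python
from collections import defaultdict


def build_chain(sequence):
    """Chaining store: each item is recorded as the cue for its successor."""
    chain = defaultdict(list)
    for cue, successor in zip(sequence, sequence[1:]):
        chain[cue].append(successor)
    return dict(chain)


def ambiguous_cues(chain):
    """Return the cues that point to more than one successor."""
    return {cue: nxt for cue, nxt in chain.items() if len(nxt) > 1}


def recall_by_position(sequence):
    """Positional store: each item is associated with its serial position."""
    store = {position: item for position, item in enumerate(sequence)}
    return [store[position] for position in range(len(store))]


sequence = list("BKBRT")  # the item "B" recurs

print(ambiguous_cues(build_chain(sequence)))  # "B" cues both "K" and "R"
print(recall_by_position(sequence))           # order recovered unambiguously
```

A sequence without repeats, such as `list("BKRT")`, produces no ambiguous cues in this sketch, which is why the problem only shows up when an item recurs.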

It has been proposed that the phonological loop evolved to facilitate the acquisition of language. In support of this, phonological loop capacity is a good predictor of second language learning. Future studies seem likely to link the phonological loop more closely to theories of language perception and production.

The visuospatial sketchpad is less well understood than the phonological loop. Spatial and visual working memory also have limited capacity and the two can be dissociated. Visuospatial working memory predicts success in fields such as architecture and engineering.

One model proposes that the sketchpad is divided into two components, a visual cache and a retrieval and rehearsal process called the 'inner scribe', analogous to the storage and articulatory components of the phonological loop.

The central executive is the least understood component of working memory. In one model, control is divided between 'automatic' habits or schemas, and an attentional system called the supervisory activating system that intervenes to overrule habitual control.

To move our concept of the supervisory activating system beyond a simple 'homunculus', we need to specify the processes attributed to it, and then to explain them. The processes needed are to focus, divide and switch attention, and to connect working memory with long-term memory. To account for this last capacity, a fourth component of working memory has been proposed: the episodic buffer, a limited capacity store that binds information to form integrated episodes.

Neuroimaging and neuropsychology have provided evidence for localization of the components of working memory. The phonological loop is associated with the left temporoparietal region, and visuospatial working memory with analogous areas in the right hemisphere. The central executive is probably associated with the frontal lobes.

Work on the phonological loop, the visuospatial sketchpad and the central executive should be more closely linked to studies on language, visual processing and motor control, and executive control, respectively. In addition, more work is needed on what drives working memory.

The concept of working memory proposes that a dedicated system maintains and stores information in the short term, and that this system underlies human thought processes. Current views of working memory involve a central executive and two storage systems: the phonological loop and the visuospatial sketchpad. Although this basic model was first proposed 30 years ago, it has continued to develop and to stimulate research and debate. The model and the most recent results are reviewed in this article.


Andrade, J. Working Memory in Context (Psychology, Hove, Sussex, 2001).

Google Scholar

Miyake, A. & Shah, P. (eds) Models of Working Memory: Mechanisms of Active Maintenance and Executive Control (Cambridge Univ. Press, New York, 1999).

Book Google Scholar

Conway, A. R. A., Jarrold, C., Kane, M. J., Miyake, A. & Towse, J. N. Variation in Working Memory (Oxford Univ. Press, Oxford, in the press). References 1–3 provide excellent accounts of the current states of research on working memory, with reference 2 representing a range of theoretical approaches and highlighting their common features, reference 1 containing the view of a range of young British researchers on the strengths and weaknesses of the multi-component model, and reference 3 having more North American contributors and better reflecting approaches based on individual differences.

Miyake, A. & Shah, P. in Models of Working Memory: Mechanisms of Active Maintenance and Executive Control (eds Miyake, A. & Shah, P.) 28–61 (Cambridge Univ. Press, New York, 1999).

Cowan, N. in Models of Working Memory: Mechanisms of Active Maintenance and Executive Control (eds Miyake, A. & Shah, P.) 62–101 (Cambridge Univ. Press, New York, 1999). An alternative approach to working memory that focuses on attentional control. This leads to a different emphasis, but is not in any fundamental sense incompatible with a multi-component model.

Nairne, J. S. A feature model of immediate memory. Mem. Cogn. 18 , 251–269 (1990).

Article CAS Google Scholar

Neath, I. Modeling the effects of irrelevant speech on memory. Psychon. Bull. Rev. 7 , 403–423 (2000).

Article CAS PubMed Google Scholar

Nairne, J. S. Remembering over the short-term: the case against the standard model. Annu. Rev. Psychol. 53 , 53–81 (2002).

Article PubMed Google Scholar

Miller, G. A., Galanter, E. & Pribram, K. H. Plans and the Structure of Behavior (Holt, Rinehart & Winston, New York, 1960).

Baddeley, A. D. & Hitch, G. J. in Recent Advances in Learning and Motivation (ed. Bower, G. A.) 47–89 (Academic, New York, 1974).

Hebb, D. O. The Organization of Behavior (Wiley, New York, 1949).

Brown, J. Some tests of the decay theory of immediate memory. Q. J. Exp. Psychol. 10 , 12–21 (1958).

Article Google Scholar

Peterson, L. R. & Peterson, M. J. Short-term retention of individual verbal items. J. Exp. Psychol. 58 , 193–198 (1959).

Melton, A. W. Implications of short-term memory for a general theory of memory. J. Verbal Learn. Verbal Behav. 2 , 1–21 (1963).

Baddeley, A. D. Human Memory: Theory and Practice 2nd edn (Psychology, Hove, Sussex, 1997).

Atkinson, R. C. & Shiffrin, R. M. in The Psychology of Learning and Motivation: Advances in Research and Theory (ed. Spence, K. W.) 89–195 (Academic, New York, 1968).

Craik, F. I. M. & Lockhart, R. S. Levels of processing: a framework for memory research. J. Verbal Learn. Verbal Behav. 11 , 671–684 (1972).

Shallice, T. & Warrington, E. K. Independent functioning of verbal memory stores: a neuropsychological study. Q. J. Exp. Psychol. 22 , 261–273 (1970).

Vallar, G. & Papagno, C. in Handbook of Memory Disorders (eds Baddeley, A. D., Kopelman, M. D. & Wilson, B. A.) 249–270 (Wiley, Chichester, 2002). An excellent summary of the implications of the study of phonological working memory of neuropsychological patient studies.

Conrad, R. Acoustic confusion in immediate memory. B. J. Psychol. 55 , 75–84 (1964).

Conrad, R. & Hull, A. J. Information, acoustic confusion and memory span. B. J. Psychol. 55 , 429–432 (1964).

Baddeley, A. D. Short-term memory for word sequences as a function of acoustic, semantic and formal similarity. Q. J. Exp. Psychol. 18 , 362–365 (1966).

Baddeley, A. D. The influence of acoustic and semantic similarity on long-term memory for word sequences. Q. J. Exp. Psychol. 18 , 302–309 (1966).

Baddeley, A. D. Thomson, N. & Buchanan, M. Word length and the structure of short-term memory. J. Verbal Learn. Verbal Behav. 14 , 575–589 (1975).

Murray, D. J. Articulation and acoustic confusability in short-term memory. J. Exp. Psychol. 78 , 679–684 (1968).

Vallar, G. & Baddeley, A. D. Fractionation of working memory. Neuropsychological evidence for a phonological short-term store. J. Verbal Learn. Verbal Behav. 23 , 151–161 (1984).

Baddeley, A. D. & Wilson, B. Phonological coding and short-term memory in patients without speech. J. Mem. Lang. 24 , 490–502 (1985).

Caplan, D., Rochon, E. & Waters, G. S. Articulatory and phonological determinants of word-length effects in span tasks. Q. J. Exp. Psychol. 45 , 177–192 (1992).

Henson, R. N. A. in Working Memory in Perspective (ed. Andrade, J.) 151–174 (Psychology, Hove, Sussex, 2001). Offers a clear and useful summary of neuroimaging research viewed from a working memory perspective.

Henson, R. N. A., Norris, D. G., Page, M. P. A. & Baddeley, A. D. Unchained memory: error patterns rule out chaining models of immediate serial recall. Q. J. Exp. Psychol. 49 , 80–115 (1996).

Brown, G. D. A. Preece, T. & Hulme, C. Oscillator-based memory for serial order. Psychol. Rev. 107 , 127–181 (2000).

Murdock, B. B. TODAM2: A model for the storage and retrieval of item, associative, and serial order information. Psychol. Rev. 100 , 183–203 (1993).

Henson, R. N. A. Item repetition in short-term memory: Ranschburg repeated. J. Exp. Psychol. Learning Mem. Cogn. 24 , 1162–1181 (1998).

Baddeley, A. D. How does acoustic similarity influence short-term memory? Q. J. Psychol. 20 , 249–264 (1968).

CAS Google Scholar

Burgess, N. & Hitch, G. J. Towards a network model of the articulatory loop. J. Mem. Lang. 31 , 429–460 (1992).

Burgess, N. & Hitch, G. J. Memory for serial order: a network model of the phonological loop and its timing. Psychol. Rev. 106 , 551–581 (1999). A good example of one of a range of applications of computational modelling to the study of working memory.

Henson, R. N. A. Short-term memory for serial order. The start–end model. Cogn. Psychol. 36 , 73–137 (1998).

Page, M. A. & Norris, D. The primacy model: a new model of immediate serial recall. Psychol. Rev. 105 , 761–781 (1998).

Logie, R. H., Della Sala, S., Laiacona, M., Chalmers, P. & Wynn, V. Group aggregates and individual reliability: the case of verbal short-term memory. Mem. Cogn. 24 , 305–321 (1996). Probably the only study to attempt to quantify the magnitude and robustness of the phonological similarity and word-length effects.

Larsen, J. D. & Baddeley, A. D. Disruption of verbal STM by irrelevant speech, articulatory suppression and manual tapping: do they have a common source? Q. J. Exp. Psychol. (in the press).

Neath, I. Farley, L. A. & Surprenant, A. M. Directly assessing the relationship between speech and articulatory suppression. Q. J. Exp. Psychol. (in the press).

Hanley, J. R. & Bakopoulou, E. Irrelevant speech, articulatory suppression and phonological similarity: a test of the phonological loop model and the feature model. Psychon. Bull. Rev. 10 , 435–444 (2003). This study emphasizes the role of strategy in working memory, demonstrating powerful effects of strategic instructions to subjects.

Lovatt, P. & Avons, S. E. in Working Memory in Perspective (ed. Andrade, J.) 199–218 (Psychology, Hove, Sussex, 2001).

Brown, G. D. A. & Hulme, C. Modeling item length effects in memory span: no rehearsal needed? J. Mem. Lang. 34 , 594–621 (1995).

Neath, I. & Nairne, J. S. Word-length effects in immediate memory: overwriting trace-decay theory. Psychon. Bull. Rev. 2 , 429–441 (1995).

Cowan, N., Baddeley, A. D., Elliott, E. M. & Norris, J. List composition and the word length effect in immediate recall: a comparison of localist and globalist assumptions. Psychol. Bull. Rev. 10 , 74–79 (2003).

Baddeley, A. D., Chincotta, D., Stafford, L. & Turk, D. Is the word length effect in STM entirely attributable to output delay? Evidence from serial recognition. Q. J. Exp. Psychol. 55A , 353–369 (2002).

Dosher, B. A. & Ma, J. J. Output loss or rehearsal loop? Output-time versus pronunciation-time limits in immediate recall for forgetting-matched materials. J. Exp. Psychol. Learn. 24 , 316–335 (1998).

Lovatt, P., Avons, S. E. & Masterson, J. The word length effect and disyllabic words. Q. J. Exp. Psychol. 53 , 1–22 (2000).

Baddeley, A. D. & Andrade, J. Reversing the word-length effect: a comment on Caplan, Rochon and Waters. Q. J. Exp. Psychol. 47A , 1047–1054 (1994).

Caplan, D. & Waters, G. S. Articulatory length and phonological similarity in span tasks: a reply to Baddeley and Andrade. Q. J. Exp. Psychol. 47A , 1055–1062 (1994).

Service, E. Effect of word length on immediate serial recall depends on phonological complexity, not articulatory duration. Q. J. Exp. Psychol. 51A , 283–304 (1998).

Mueller, S. T., Seymour, T. L., Kieras, D. E. & Meyer, D. E. Theoretical implications of articulatory duration, phonological similarity, and phonological complexity in verbal working memory. J. Exp. Psychol. Learn. Mem. Cogn. (in the press). The most careful and sophisticated analysis of the roles of spoken duration and phonological similarity in verbal STM, leading to a clear and simple theoretical conclusion. A good account of potential pitfalls in this area of research is also provided.

Colle, H. A. Auditory encoding in visual short-term recall: effects of noise intensity and spatial location. J. Verbal Learn. Verbal Behav. 19 , 722–735 (1980).

Jones, D. M. in Attention: Selection, Awareness and Control (eds Baddeley, A. D. & Weiskrantz, L.) 87–104 (Clarendon, Oxford, 1993).

Jones, D. M. & Macken, W. J. Irrelevant tones produce an irrelevant speech effect: implications for phonological coding in working memory. J. Exp. Psychol. Learn. Mem. Cogn. 19 , 369–381 (1993).

Salame, P. & Baddeley, A. D. Disruption of short-term memory by unattended speech: implications for the structure of working memory. J. Verbal Learn. Verbal Behav. 21 , 150–164 (1982).

Colle, H. A. & Welsh, A. Acoustic masking in primary memory. J. Verbal Learn. Verbal Behav. 15 , 17–32 (1976).

Salame, P. & Baddeley, A. D. Phonological factors in STM: similarity and the unattended speech effect. Bull. Psychon. Soc. 24 , 263–265 (1986).

Jones, D. M. & Macken, W. J. Phonological similarity in the irrelevant speech effect. Within- or between-stream similarity? J. Exp. Psychol. Learn. Mem. Cogn. 21 , 103–115 (1995).

Larsen, J. D. Baddeley, A. D. & Andrade, J. Phonological similarity and the irrelevant speech effect: implications for models of short-term verbal memory. Memory 8 , 145–157 (2000).

Le Compte, D. C. & Shaibe, D. M. On the irrelevance of phonological similarity to the irrelevant speech effect. Q. J. Exp. Psychol. 50A , 100–118 (1997).

Jones, D., Beaman, M. & Macken, W. J. in Models of Short-Term Memory (ed. Gathercole, S.) 209–237 (Psychology, Hove, Sussex, 1996).

Page, M. A. & Norris, D. The irrelevant sound effect: what needs modeling and a tentative model. Q. J. Exp. Psychol. (in the press).

Macken, W. J. & Jones, D. M. Reification of phonological storage. Q. J. Exp. Psychol. (in the press).

Baddeley, A. D., Gathercole, S. E. & Papagno, C. The phonological loop as a language learning device. Psychol. Rev. 105 , 158–173 (1998). Summarizes the evidence supporting the view that the phonological loop serves as a device for facilitating language acquisition.

Papagno, C., Valentine, T. & Baddeley, A. D. Phonological short-term memory and foreign language vocabulary learning. J. Mem. Lang. 30 , 331–347 (1991).

Papagno, C. & Vallar, G. Phonological short-term memory and the learning of novel words: the effect of phonological similarity and item length. Q. J. Exp. Psychol. 44A , 47–67 (1992).

Service, E. Phonology, working memory and foreign-language learning. Q. J. Exp. Psychol. 45A , 21–50 (1992).

Atkins, W. B. & Baddeley, A. D. Working memory and distributed vocabulary learning. App. Psycholinguistics 19 , 537–552 (1998).

Gathercole, S. E. & Adams, A. M. Children's phonological working memory: contributions of long-term knowledge and rehearsal. J. Mem. Lang. 33 , 672–788 (1994).

Gathercole, S. E. & Baddeley, A. D. Evaluation of the role of phonological STM in the development of vocabulary in children: a longitudinal study. J. Mem. Lang. 28 , 200–213 (1989).

Gathercole, S. E. & Baddeley, A. D. Phonological memory deficits in language-disordered children: is there a causal connection? J. Mem. Lang. 29 , 336–360 (1990).

Gathercole, S. E., Pickering, S., Hall, M. & Peaker, S. Dissociable lexical and phonological influences on serial recognition and serial recall. Q. J. Exp. Psychol. 54A , 1–30 (2001).

Houghton, G. Hartley, T. & Glasspool, D. W. in Models of Short-Term Memory (ed. Gathercole, S. E.) 101–128 (Psychology, Hove, Sussex, 1996).

Gathercole, S. E. Is nonword repetition a test of phonological memory or long-term knowledge? It all depends on the nonwords. Mem. Cogn. 23 , 83–94 (1995).

Thorn, A. S. C., Gathercole, S. E. & Frankish, C. R. Language familiarity effects in short-term memory: the role of output delay and long-term knowledge. Q. J. Exp. Psychol. 55A , 1363–1383 (2002).

O'Regan, J. K., Rensink, R. A. & Clark, J. J. Change-blindness as a result of 'mudsplashes'. Nature 398 , 34–34 (1999).

Simons, D. J. & Levin, D. T. Change blindness. Trends Cogn. Sci. 1 , 261–267 (1997).

O'Regan, J. K. Solving the real mysteries of visual-perception — the world as an outside memory. Can. J. Psychol. 46 , 461–488 (1992).

Luck, S. J. & Vogel, E. K. The capacity of visual working memory for features and conjunctions. Nature 390 , 279–281 (1997).

Vogel, E. K., Woodman, G. F. & Luck, S. J. Storage of features, conjunctions and objects in visual working memory. J. Exp. Psychol. Hum. Percept. Perf. 27 , 92–114 (2001).

Wheeler, M. E. & Treisman, A. M. Binding in short-term visual memory. J. Exp. Psychol. Gen. 131 , 48–64 (2002). A good example of the recent tendency for prominent researchers in visual attention to analyse related processes of visuospatial working memory.

Milner, B. Inter-hemispheric differences in the locatisation of psychological processes in man. B. Med. Bull. 27 , 272–277 (1971).

Della Sala, S., Gray, C., Baddeley, A. D., Allamano, N. & Wilson, L. Pattern span: a tool for unwelding visuo-spatial memory. Neuropsychologia 37 , 1189–1199 (1999).

Della Sala, S. & Logie, R. H. in Handbook of Memory Disorders (eds Baddeley, A. D., Kopelman, M. D. & Wilson, B. A.) 271–292 (Wiley, Chichester, 2002)

Smith, E. E. et al. Spatial versus object working memory: PET investigations. J. Cogn. Neurosci. 7 , 337–358 (1995).

Mishkin, M., Ungerleider, L. G. & Macko, K. O. Object vision and spatial vision: two cortical pathways. Trends Neurosci. 6 , 414 (1983).

Pickering, S. J. Cognitive approaches to the fractionation of visuo-spatial working memory. Cortex 37 , 470–473 (2001).

Smyth, M. M. & Pendleton, L. R. Space and movement in working memory. Q. J. Exp. Psychol. 42A , 291–304 (1990).

Logie, R. H. Visuo-spatial processing in working memory. Q. J. Exp. Psychol. 38A , 229–247 (1986).

Quinn, G. & McConnell, J. Irrelevant pictures in visual working memory. Q. J. Exp. Psychol. 49A , 200–215 (1996).

Purcell, A. T. & Gero, J. S. Drawings and the design process. Design Studies 19 , 389–430 (1998).

Verstijnen, I. M., van Leeuwen, C., Goldschimdt, G., Haeml, R. & Hennessey, J. M. Creative discovery in imagery and perception: combining is relatively easy, restructuring takes a sketch. Acta Psychol. 99 , 177–200 (1998).

Ghiselin, B. The Creative Process (Mentor, New York, 1952).

Finke, R. & Slayton, K. Explorations of creative visual synthesis in mental imagery. Mem. Cogn. 16 , 252–257 (1988).

Finke, R., Ward, T. B. & Smith, S. M. Creative Cognition: Theory, Research and Applications (MIT Press, Cambridge, Massachusetts, 1992)

Pearson, D. G., Logie, R. H. & Gilhooly, K. Verbal representations and spatial manipulation during mental synthesis. Eur. J. Cogn. Psychol. 11 , 295–314 (1999).

Pearson, D. G. in Working Memory in Perspective (ed. Andrade, J.) 33–59 (Psychology, Hove, Sussex, 2001).

Brandimonte, M. & Gerbino, W. Mental image reversal and verbal recoding: when ducks become rabbits. Mem. Cogn. 21 , 23–33 (1993).

Brandimonte, M., Hitch, G. J. & Bishop, D. Verbal recoding of visual stimuli in pairs mental image transformations. Mem. Cogn. 20 , 449–455 (1992).

Logie, R. H. Visuo-spatial Working Memory (Lawrence Erlbaum, Hove, Sussex, 1995).

Goldman-Rakic, P. W. Topography of cognition: parallel distributed networks in primate association cortex. Annu. Rev. Neurosci. 11 , 137–156 (1988). A review of studies in which single-unit recording in awake monkeys is used to analyse the nature of visuospatial working memory.

Goldman-Rakic, S. The prefrontal landscape: implications of functional architecture for understanding human mentation and the central executive. Phil. Trans. R. Soc. Lond. B 351 , 1445–1453 (1996).

Baddeley, A. D. Working Memory (Oxford Univ. Press, Oxford, 1986).

Norman, D. A. & Shallice, T. in Consciousness and Self-regulation. Advances in Research and Theory (eds Davidson, R. J., Schwarts, G. E. & Shapiro, D.) 1–18 (Plenum, New York, 1986).

Shallice, T. From Neuropsychology to Mental Structure (Cambridge Univ. Press, Cambridge, 1988).

Shallice, T. in Principles of Frontal Lobe Function (eds Stuss, D. T. & Knight, R. T.) 261–277 (Oxford Univ. Press, New York, 2002).

Shallice, T. & Burgess, P. W. Deficits in strategy application following frontal lobe damage in men. Brain 114 , 727–741 (1991).

Bargh, J. A. & Ferguson, M. J. Beyond behaviorism: on the automaticity of higher mental processes. Psychol. Bull. 126 , 925–945 (2000). This article makes a strong case for the importance of implicit factors in determining social behaviour.

Bargh, J. A., Chen, M. & Burrows, L. Automaticity of social behavior: direct effects of trait construct and stereotype activation on action. J. Personality Soc. Psychol. 71 , 230–244 (1996).

Chartrand, T. L. & Bargh, J. A. The chameleon effect: the perception-behavior link and social interaction. J. Personality Soc. Psychol. 76 , 893–910 (1999).

Chartrand, T. L. & Bargh, J. A. in Self and Motivation: Emerging Psychological Perspectives (eds Tesser, A., Stapel, D. A. & Wood, J. V.) 13–41 (American Psychological Association, Washington DC, 2002).

Baumeister, R. F. & Exline, J. J. Self control, morality and human strength. J. Soc. Clin. Psychol. 19 , 29–42 (2000).

Baumeister, R. F., Bratslavsky, E., Muraven, M. & Tice, D. M. Ego-depletion: is the active self a limited resource? J. Personality Soc. Psychol. 74 , 1252–1265 (1998).

Muraven, M. & Baumeister, R. F. Self regulation and depletion of limited resources: does self control resemble a muscle? Psychol. Bull. 126 , 247–259 (2000).

McDougall, W. Outline of Psychology (Scribner, New York, 1923)

Attneave, F. in Sensory Communication (ed. Rosenblith, W.) 777–782 (MIT Press, Cambridge, Massachussetts, 1960).

Baddeley, A. D. Exploring the central executive. Q. J. Exp. Psychol. 49A , 5–28 (1996).

Baddeley, A. D., Bressi, S., Della Sala, S., Logie, R. & Spinnler, H. The decline of working memory in Alzheimer's disease: a longitudinal study. Brain 114 , 2521–2542 (1991).

Baddeley, A. D., Baddeley, H. A., Bucks, R. S. & Wilcock, G. K. Attentional control in Alzheimer's disease. Brain 124 , 1492–1508 (2001).

Baddeley, A. D. & Wilson, B. A. Prose recall and amnesia: implications for the structure of working memory. Neuropsychologia 40 , 1737–1743 (2002).

Logie, R. H., Della Sala, S., Wynn, V. & Baddeley, A. D. Visual similarity effects in immediate serial recall. Q. J. Exp. Psychol. 53A , 626–646 (2000).

Baddeley, A. D. & Andrade, J. Working memory and the vividness of imagery. J. Exp. Psychol. Gen. 129 , 126–145 (2000).

Baddeley, A. D. The episodic buffer: a new component of working memory? Trends Cogn. Sci. 4 , 417–423 (2000). This gives a brief account of the argument for assuming a fourth component to the working memory model.

Baars, B. J. A Cognitive Theory of Consciousness (Cambridge Univ. Press, Cambridge, 1988).

Baars, B. J. The conscious access hypothesis: origins and recent evidence. Trends Cogn. Sci. 6 , 47–52 (2002).

Dehaene, S. & Naccache, L. Towards a cognitive neuroscience of consciousness: basic evidence and a workspace framework. Cognition 79 , 1–37 (2001). An excellent review of the neuropsychological and neuroimaging evidence for a limited capacity work-space system that is associated with conscious awareness.

Ericsson, K. A. & Kintsch, W. Long-term working memory. Psychol. Rev. 102 , 211–245 (1995).

Warrington, E. J., Logue, V. & Pratt, R. T. C. The anatomical localisation of selective impairment of auditory verbal short-term memory. Neuropsychologia 9 , 377–387 (1971).

Vallar, G., DiBetta, A. M. & Silveri, M. C. The phonological short-term store-rehearsal system: patterns of impairment and neural correlates. Neuropsychologia 35 , 795–812 (1997).

Paulesu, E., Frith, C. D. & Frackowiak, R. S. J. The neural correlates of the verbal component of working memory. Nature 362 , 342–345 (1993).

Jonides, J. et al. in The Psychology of Learning and Motivation (ed. Medin, D.) 43–88 (Academic, London, 1996). A good account of this group's work on applying neuroimaging to the study of both the phonological and visuospatial subsystems of working memory.

Jonides, J. & Smith, E. E. in Cognitive Neuroscience (ed. Rugg, M. D.) 243–276 (Psychology, Hove, Sussex, 1997).

Awh, E. et al. Dissociation of storage and retrieval in verbal working memory: evidence from positron emission tomography. Psychol. Sci. 7 , 25–31 (1996).

Smith, E. E. & Jonides, J. Working memory: a view from neuroimaging. Cogn. Psychol. 33 , 5–42 (1997).

Smith, E., Jonides, J. & Koeppe, R. A. Dissociating verbal and spatial working memory using PET. Cereb. Cortex 6 , 11–20 (1996).

DeRenzi, E. & Nichelli, P. Verbal and non-verbal short-term memory impairment following hemispheric damage. Cortex 11 , 341–353 (1975).

Hanley, J. R., Young, A. W. & Pearson, N. A. Impairment of the visuospatial sketch pad. Q. J. Exp. Psychol. 43A , 101–125 (1991).

Kosslyn, S. M. et al. Visual mental imagery activates topographically organised cortex: PET investigations. J. Cogn. Neurosci. 5 , 263–287 (1993).

Jonides, J. et al. Spatial working memory in humans as revealed by PET. Nature 363 , 623–625 (1993).

Awh, E., Jonides, J. & Reuter-Lorenz, P. A. Rehearsal in spatial working memory. J. Exp. Psychol. Hum. Percept. Perf. 24 , 780–790 (1998).

Wilson, F. A. W. Scalaidhe, S. & Goldman-Rakic, S. Dissociation of object and spatial processing domains in primate prefrontal cortex. Science 260 , 1955–1958 (1993).

Levin, D. N. Warach, J. & Farah M. J. Two visual systems in mental imagery: dissociation of 'what' and 'where' in imagery disorders due to bilateral posterior cerebral lesions. Neurology 35 , 1010–1018 (1985).

Owen, A. M. The functional organisation of working memory processes within the human lateral frontal cortex: the contribution of functional neuroimaging. Eur. J. Neurosci. 9 , 1329–1339 (1997).

Stuss, D. T. & Knight, R. T. Principles of Frontal Lobe Function (Oxford Univ. Press, New York, 2002).

Braver, T. S. et al. A parametric study of prefrontal cortex involvement in human working memory. Neuroimage 5 , 49–62 (1997).

Cohen, J. D. et al. Temporal dynamics of brain activation during a working memory task. Nature 386 , 604–608 (1997).

Frith, C. D., Friston, K. J., Liddle, P. F. & Frackowiak, R. S. J. Willed action in the prefrontal cortex in man: a study with PET. Proc. R. Soc. Lond. B 244 , 241–246 (1991).

Jahanshahi, M., Dirnberger, G., Fuller, R. & Frith, C. D. The role of the dorsolateral prefrontal cortex in random number generation: a study with positron emission tomography. Neuroimage 12 , 713–725 (2000).

Baddeley, A. D. Emslie, H., Kolodny, J. & Duncan, J. Random generation and the executive control of working memory. Q. J. Exp. Psychol. 51A , 818–852 (1998).

Duncan, J. & Owen, A. M. Common regions of the human frontal lobe recruited by diverse cognitive demands. Trends Neurosci. 23 , 475–483 (2000). A meta-analysis of neuroimaging studies of attentional control that argues for the dependence of diverse executive processes on a limited anatomical region of the right frontal lobe.

D'Esposito, M. et al. The neural basis of the central executive system of working memory. Nature 378 , 279–281 (1995).

Klingberg, T. Concurrent performance of two working memory tasks: potential mechanisms of interference. Cereb. Cortex 8 , 593–601 (1998).

Fletcher, T. C. et al. Brain systems for encoding and retrieval of auditory-verbal memory: an in vivo study in humans. Brain 118 , 401–416 (1995).

Goldberg, T. E. et al. Uncoupling cognitive workload and prefrontal cortical physiology: a PET rCBF study. Neuroimage 7 , 296–303 (1998).

Damasio, A. R. Descarte's Error: Emotion, Reason, and the Human Brain (Putnam, New York, 1994).

Hume, D. An Enquiry Concerning Human Understanding (Hackett, Indianapolis, 1772/1993).

Lewin, K. Field Theory in Social Science (Harper, New York, 1951).

Kennard, C. & Swash, M. (Eds) Hierarchies in Neurology: reappraisal of Jacksonian Concept (Springer, Berlin, 1989).

Craik, K. J. W. The Nature of Explanation (Cambridge Univ. Press, London, 1943).

Broadbent, D. E. Levels, hierarchies and the locus of control. Q. J. Exp. Psychol. 29 , 181–201 (1977).

Frith, C. D., Blakemore, S. J. & Wolpert, D. M. Abnormalities in the awareness and control of action. Phil. Trans. R. Soc. Lond. B 355 , 1771–1788 (2000). A stimulating attempt to provide an account of the control of action based on a range of experimental phenomena and neuropsychological deficits. It makes a potentially important case for the possible extension of a model of motor control to the more general control of action and behaviour.

Daneman, M. & Carpenter, A. Individual differences in working memory and reading. J. Verbal Learn. Verbal Behav. 19 , 450–466 (1980).

Daneman, M. & Merikle, M. Working memory and language comprehension: A meta-analysis. Psychon. Bull. Rev. 3 , 422–433 (1996).

Turner, M. L. & Engle, R. W. Is working memory capacity task-dependent? J. Mem. Lang. 28 , 127–154 (1989).

Bayliss, D. M., Jarrold, C., Gunn, D. M. & Baddeley, A. D. The complexities of complex span: Explaining individual differences in working memory in children and adults. J. Exp. Psychol. Gen. 132 , 71–92 (2003).

Ormrod, J. E. & Cochran, K. F. Relationship of verbal ability and working memory to spelling achievement and learning to spell. Reading Res. Instruction 28 , 33–43 (1988).

Kyllonen, C. & Stephens, D. L. Cognitive abilities as the determinants of success in acquiring logic skills. Learn. Individual Diff. 2 , 129–160 (1990).

Kiewra, K. A. & Benton, S. L. The relationship between information processing ability and note-taking. Contemp. Edu. Psychol. 13 , 3–44 (1988).

Engle, R. W., Carullo, J. J. & Collins, K. W. Individual differences in working memory for comprehension and following directions. J. Edu. Res. 84 , 253–262 (1991).

Kyllonen, P. C. & Christal, R. E. Reasoning ability is (little more than) working memory capacity. Intelligence 14 , 389–433 (1990).

Engle, R. W., Kane, M. J. & Tuholski, S. W. in Models of Working Memory: Mechanisms of Active Maintenance and Executive Control (eds Miyake, A. & Shah, P.) 102–134 (Cambridge Univ. Press, Cambridge, 1999).

Miyake, A., Friedman, N. P., Rettinger, D. A., Shah, P. & Hegarty, M. How are visuospatial working memory, executive functioning, and spatial abilities related? A latent-variable analysis. J. Exp. Psychol. Gen. 130 , 621–640 (2001).

Miyake, A. et al. The unity and diversity of executive functions and their contributions to complex 'frontal lobe' tasks: a latent variable analysis. Cogn. Psychol. 41 , 49–100 (2000). A clear account of the application of the statistical technique of latent variable analysis to the analysis of executive processes in working memory.

Kane, M. J. & Engle, R. W. The role of prefrontal cortex in working-memory capacity, executive attention and general fluid intelligence: an individual differences perspective. Psychon. Bull. Rev. 9 , 637–671 (2002).

Spearman, C. The Abilities of Man (Macmillan, London, 1927).

Thurstone, L. L. Primary Mental Abilities (Univ. Chicago Press, Chicago, 1938).

Carroll, J. B. Human Cognitive Abilities (Cambridge Univ. Press, Cambridge, 1993).

Herrnstein, R. J. & Murray, C. The Bell Curve: Intelligence and Class Structure in American Life (Free Press, New York, 1994).

Kamin, L. J. Intelligence: The Battle for the Mind (H. J. Eysenck versus Leon Kamin) (Macmillan, London, 1981).

Brooks, L. R. Spatial and verbal components in the act of recall. Can. J. Psychol. 22 , 349–368 (1968).

Martin, J. H. Neuroanatomy: Text and Atlas 2nd edn (Appleton & Lange, Stamford, Connecticut, 1996).

Author information

Authors and affiliations.

Department of Psychology, University of York, York, YO10 5DD, UK

Alan Baddeley

Related links

Further information: MIT Encyclopedia of Cognitive Sciences (neural basis of working memory; working memory)

Brodmann areas (BA). Korbinian Brodmann (1868–1918) was an anatomist who divided the cerebral cortex into numbered subdivisions on the basis of cell arrangements, types and staining properties (for example, the dorsolateral prefrontal cortex contains subdivisions, including BA 46, BA 9 and others). Modern derivatives of his maps are commonly used as the reference system for discussion of brain-imaging findings.

Unable to speak because of defective articulation.

Having an impairment of the ability to perform certain voluntary movements, often including speech.

McDougall proposed the term 'conative' to denote the activity of mental striving or the will, as opposed to cognitive and affective or emotional processes.

About this article

Cite this article.

Baddeley, A. Working memory: looking back and looking forward. Nat Rev Neurosci 4 , 829–839 (2003). https://doi.org/10.1038/nrn1201

Issue Date : 01 October 2003

DOI : https://doi.org/10.1038/nrn1201

Annual Review of Psychology

Volume 66, 2015. Review article: The Cognitive Neuroscience of Working Memory

- Mark D'Esposito 1 and Bradley R. Postle 2

- Affiliations: 1 Helen Wills Neuroscience Institute and Department of Psychology, University of California, Berkeley, California 94720. 2 Departments of Psychology and Psychiatry, University of Wisconsin, Madison, Madison, Wisconsin 53719.

- Vol. 66:115-142 (Volume publication date January 2015) https://doi.org/10.1146/annurev-psych-010814-015031

- First published as a Review in Advance on September 19, 2014

- © Annual Reviews

For more than 50 years, psychologists and neuroscientists have recognized the importance of a working memory to coordinate processing when multiple goals are active and to guide behavior with information that is not present in the immediate environment. In recent years, psychological theory and cognitive neuroscience data have converged on the idea that information is encoded into working memory by allocating attention to internal representations, whether semantic long-term memory (e.g., letters, digits, words), sensory, or motoric. Thus, information-based multivariate analyses of human functional MRI data typically find evidence for the temporary representation of stimuli in regions that also process this information in nonworking memory contexts. The prefrontal cortex (PFC), on the other hand, exerts control over behavior by biasing the salience of mnemonic representations and adjudicating among competing, context-dependent rules. The “control of the controller” emerges from a complex interplay between PFC and striatal circuits and ascending dopaminergic neuromodulatory signals.


- Singer W . 2009 . Distributed processing and temporal codes in neuronal networks. Cogn. Neurodyn. 3 : 189– 96 [Google Scholar]

- Smith EE , Jonides J . 1999 . Storage and executive processes of the frontal lobes. Science 283 : 1657– 61 [Google Scholar]

- Sreenivasan KK , Curtis CE , D'Esposito M . 2014a . Revisiting the role of persistent neural activity during working memory. Trends Cogn. Sci. 18 : 82– 89 A review of recent neural evidence for sensorimotor-recruitment models and for non-activity-based mechanisms for working memory storage. [Google Scholar]

- Sreenivasan KK , Vytlacil J , D'Esposito M . 2014b . Distributed and dynamic storage of working memory stimulus information in extrastriate cortex. J. Cogn. Neurosci. 26 : 1141– 53 [Google Scholar]

- Stokes MG , Kusunoki M , Sigala N , Nili H , Gaffan D , Duncan J . 2013 . Dynamic coding for cognitive control in prefrontal cortex. Neuron 78 : 364– 75 [Google Scholar]

- Sugase-Miyamoto Y , Liu Z , Wiener MC , Optican LM , Richmond BJ . 2008 . Short-term memory trace in rapidly adapting synapses of inferior temporal cortex. PLOS Comput. Biol. 4 : e1000073 [Google Scholar]

- Tallon-Baudry C , Kreiter A , Bertrand O . 1999 . Sustained and transient oscillatory responses in the gamma and beta bands in a visual short-term memory task in humans. Vis. Neurosci. 16 : 449– 59 [Google Scholar]

- Theeuwes J , Olivers CN , Chizk CL . 2005 . Remembering a location makes the eyes curve away. Psychol. Sci. 16 : 196– 99 [Google Scholar]

- Venkatraman V , Rosati AG , Taren AA , Huettel SA . 2009 . Resolving response, decision, and strategic control: evidence for a functional topography in dorsomedial prefrontal cortex. J. Neurosci. 29 : 13158– 64 [Google Scholar]

- Verstynen TD , Badre D , Jarbo K , Schneider W . 2012 . Microstructural organizational patterns in the human corticostriatal system. J. Neurophysiol. 107 : 2984– 95 [Google Scholar]

- Vogel EK , Woodman GF , Luck SJ . 2001 . Storage of features, conjunctions and objects in visual working memory. J. Exp. Psychol.: Hum. Percept. Perform. 27 : 92– 114 [Google Scholar]

- Wallis JD , Anderson KC , Miller EK . 2001 . Single neurons in prefrontal cortex encode abstract rules. Nature 411 : 953– 56 [Google Scholar]

- Wang M , Vijayraghavan S , Goldman-Rakic PS . 2004 . Selective D2 receptor actions on the functional circuitry of working memory. Science 303 : 853– 56 [Google Scholar]

- Wang XJ . 1999 . Synaptic basis of cortical persistent activity: the importance of NMDA receptors to working memory. J. Neurosci. 19 : 9587– 603 [Google Scholar]

- Wang XJ . 2001 . Synaptic reverberation underlying mnemonic persistent activity. Trends Neurosci. 24 : 455– 63 [Google Scholar]

- Warden MR , Miller EK . 2010 . Task-dependent changes in short-term memory in the prefrontal cortex. J. Neurosci. 30 : 15801– 10 [Google Scholar]

- Williams SM , Goldman-Rakic PS . 1993 . Characterization of the dopaminergic innervation of the primate frontal cortex using a dopamine-specific antibody. Cereb. Cortex 3 : 199– 222 [Google Scholar]

- Yeterian EH , Pandya DN , Tomaiuolo F , Petrides M . 2012 . The cortical connectivity of the prefrontal cortex in the monkey brain. Cortex 48 : 58– 81 [Google Scholar]

- Zaksas D , Bisley JW , Pasternak T . 2001 . Motion information is spatially localized in a visual working-memory task. J. Neurophysiol. 86 : 912– 21 [Google Scholar]

- Zanto TP , Rubens MT , Thangavel A , Gazzaley A . 2011 . Causal role of the prefrontal cortex in top-down modulation of visual processing and working memory. Nat. Neurosci. 14 : 656– 61 [Google Scholar]

- Article Type: Review Article


SYSTEMATIC REVIEW article

A systematic review on predictors of working memory training responsiveness in healthy older adults: methodological challenges and future directions.

- 1 Department of Medical Psychology | Neuropsychology & Gender Studies, Center for Neuropsychological Diagnostics and Intervention (CeNDI), Faculty of Medicine and University Hospital Cologne, University of Cologne, Cologne, Germany

- 2 Department I of Internal Medicine, Center for Integrated Oncology Aachen Bonn Cologne Duesseldorf, Faculty of Medicine and University Hospital of Cologne, University of Cologne, Cologne, Germany

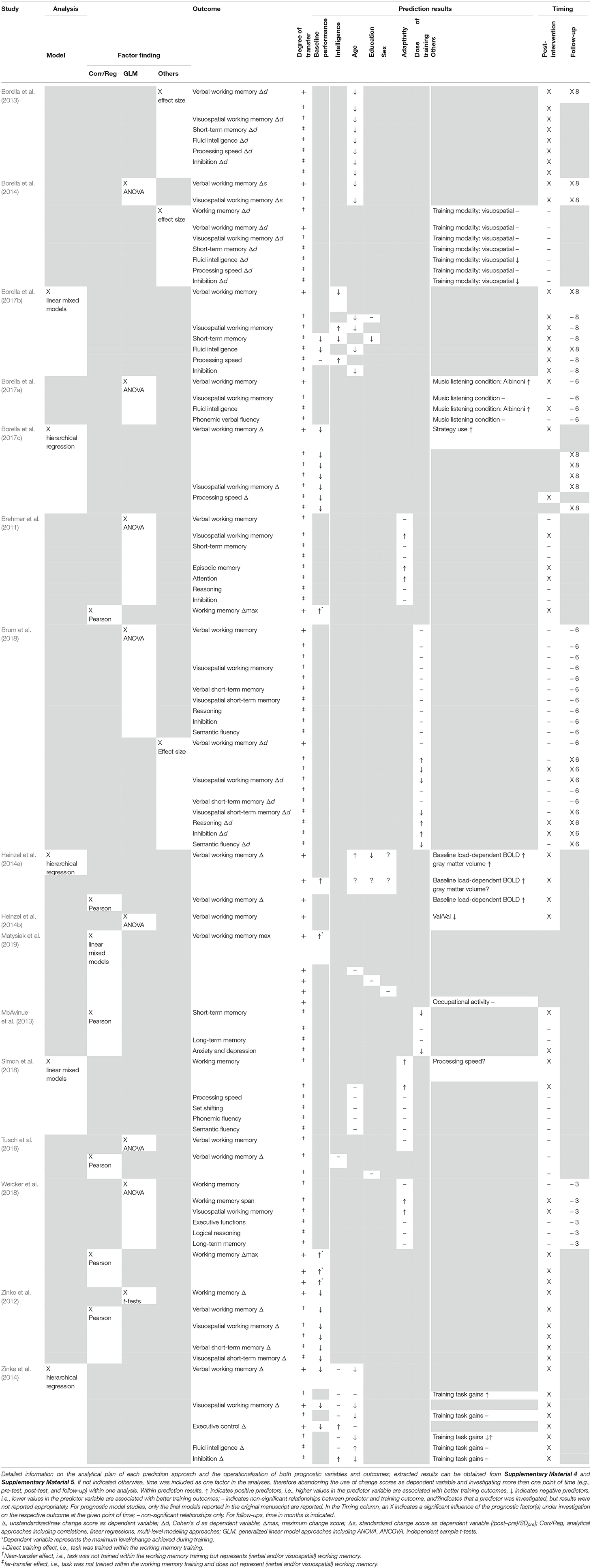

Background: Research on predictors of working memory training responsiveness, which could help tailor cognitive interventions to the individual, is a timely topic in healthy aging. However, findings are highly heterogeneous and partly conflicting, and studies have used a broad spectrum of methodological approaches to answer the question of “who benefits most” from working memory training.

Objective: This systematic review aimed to investigate prognostic factors and models for working memory training responsiveness in healthy older adults.

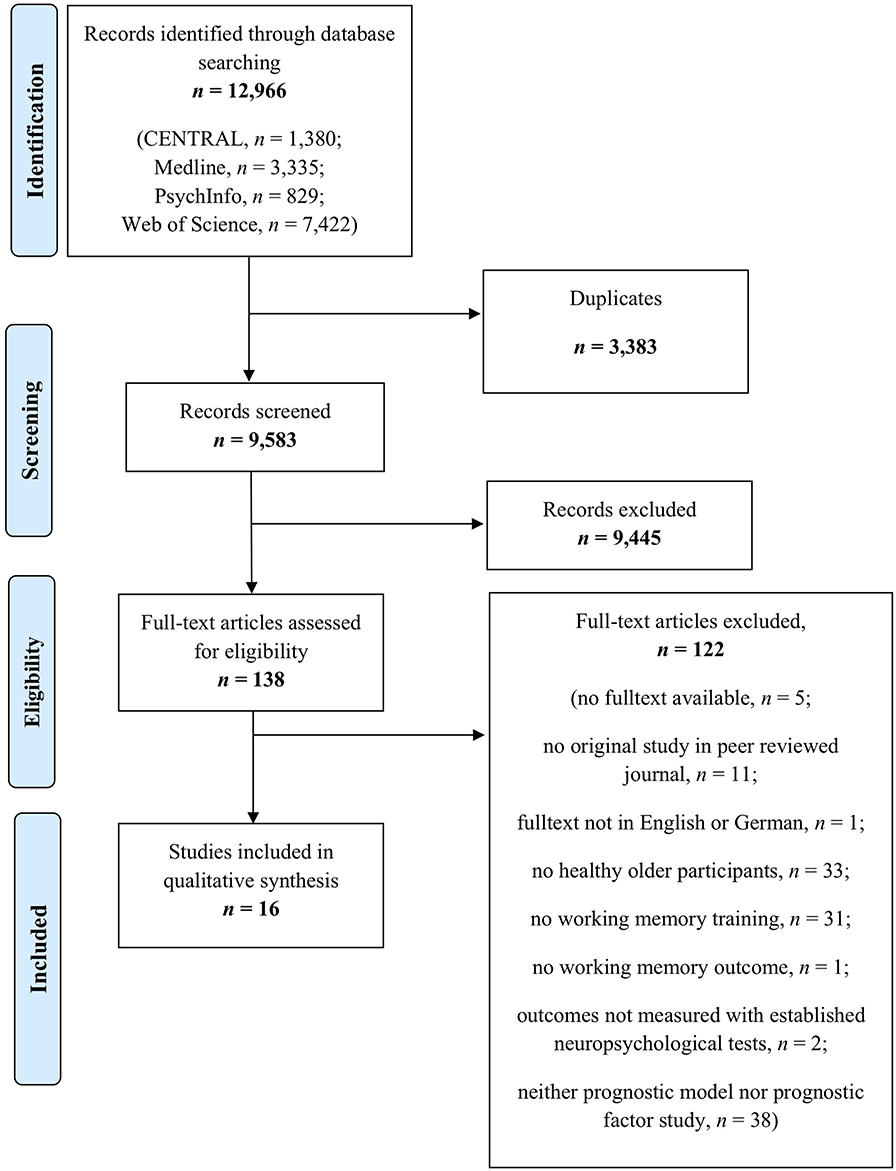

Method: Four online databases (MEDLINE Ovid, Web of Science, CENTRAL, and PsycINFO) were searched up to October 2019. Full texts were included if they were published in a peer-reviewed journal in English or German; included healthy older individuals aged ≥55 years without neurological and/or psychiatric diseases, including cognitive impairment; and investigated prognostic factors and/or models for training responsiveness after targeted working memory training. Training responsiveness covered direct training effects, near-transfer effects to verbal and visuospatial working memory, and far-transfer effects to other cognitive domains and behavioral variables. The study design was not limited to randomized controlled trials.
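The inclusion criteria stated in the Method can be read as a simple screening predicate. The following is a minimal sketch only, not the authors' screening procedure: the `StudyRecord` type, its field names, and the choice to represent the age criterion by the youngest participant's age are all illustrative assumptions.

```python
from dataclasses import dataclass

# Languages accepted by the stated inclusion criteria.
ACCEPTED_LANGUAGES = {"English", "German"}
# Minimum participant age in years (the "aged >=55 years" criterion).
MIN_AGE_YEARS = 55


@dataclass
class StudyRecord:
    """Hypothetical full-text screening record (all field names are assumptions)."""
    peer_reviewed: bool        # published in a peer-reviewed journal
    language: str              # publication language
    youngest_participant: int  # age in years of the youngest participant
    healthy_sample: bool       # no neurological/psychiatric disease or cognitive impairment
    targeted_wmt: bool         # intervention is targeted working memory training
    reports_prognostic: bool   # investigates prognostic factors and/or models


def meets_inclusion_criteria(study: StudyRecord) -> bool:
    """Return True only if every stated inclusion criterion holds.

    Study design is deliberately not checked, because the review was
    not limited to randomized controlled trials.
    """
    return (
        study.peer_reviewed
        and study.language in ACCEPTED_LANGUAGES
        and study.youngest_participant >= MIN_AGE_YEARS
        and study.healthy_sample
        and study.targeted_wmt
        and study.reports_prognostic
    )
```

Writing the criteria as one conjunction makes explicit that a full text fails screening as soon as any single criterion is not met.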

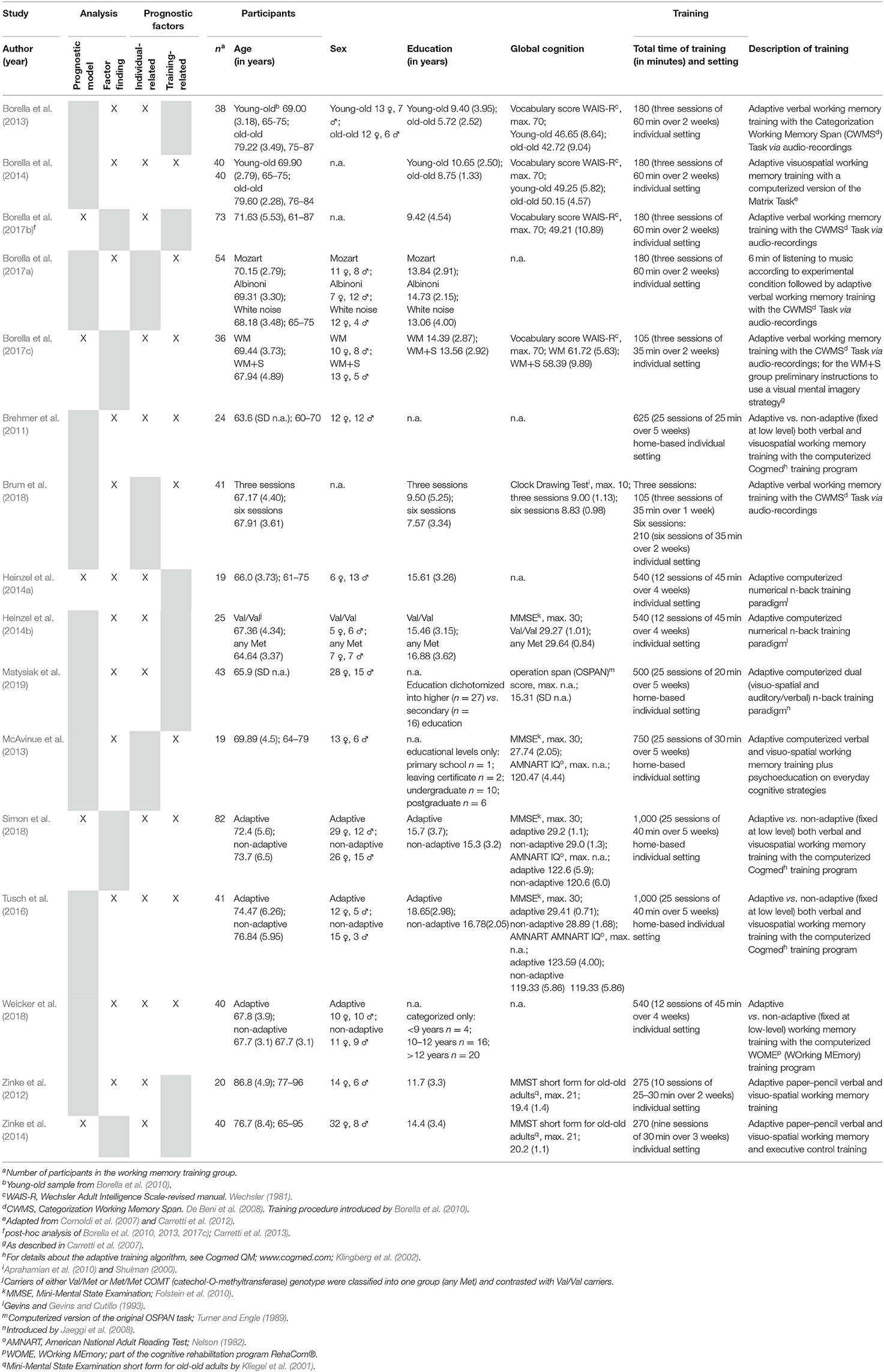

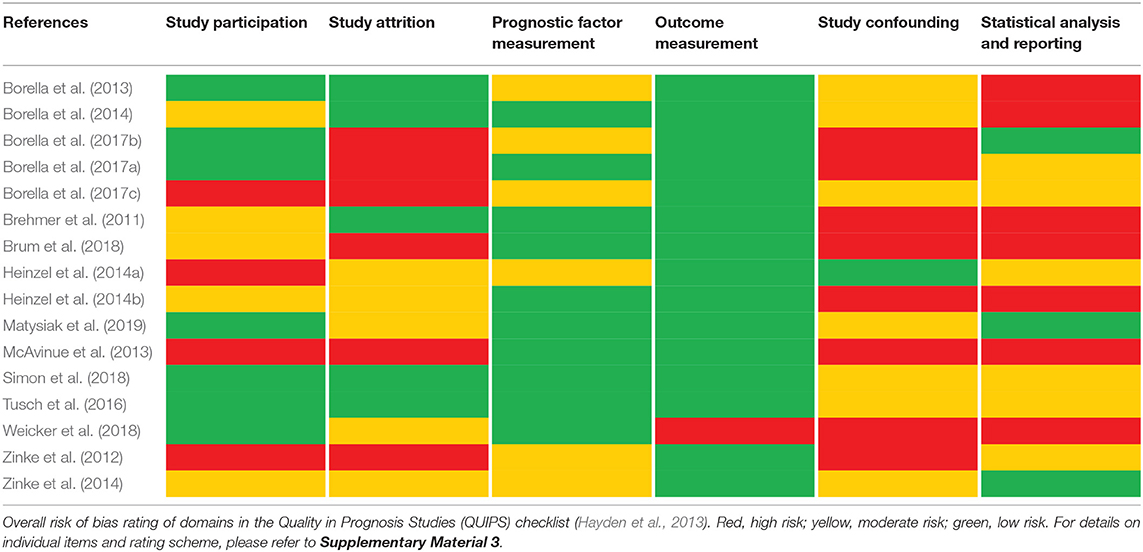

Results: A total of 16 studies, comprising n = 675 healthy older individuals with mean ages ranging from 63.0 to 86.8 years, were included in this review. Within these studies, five prognostic model approaches and 18 factor finding approaches were reported. Risk of bias was assessed using the Quality in Prognosis Studies checklist, which indicated that important information was lacking in many of the included studies, especially in the domains of study attrition, study confounding, and statistical analysis and reporting. Age, education, intelligence, and baseline performance in working memory or other cognitive domains were frequently investigated predictors across studies.

Conclusions: Given the methodological shortcomings of the included studies, no clear conclusions can be drawn, and emerging patterns of prognostic effects will have to survive sound methodological replication in future attempts to promote precision medicine approaches in the context of working memory training. Methodological considerations are discussed, and our findings are embedded in the cognitive aging literature, considering, for example, the cognitive reserve framework and the compensation vs. magnification account. The need for personalized cognitive prevention and intervention methods to counteract cognitive decline in the aging population is high, and the potential is enormous.

Registration: PROSPERO, ID CRD42019142750.

Introduction

The promotion of healthy aging constitutes a major goal given the demographic change that the world's population is facing (Parish et al., 2019). One key aspect of healthy aging is the maintenance of cognitive functions by preventing or delaying the onset of clinically relevant cognitive dysfunction or even reversing age-related cognitive decline (Lustig et al., 2009). Cognitive decline is one of the most feared aspects of aging (Deary et al., 2009), as it reduces the quality of life of both the aging individual and their relatives and increases the burden on care providers and the public healthcare system. Decline in executive functions, working memory, processing speed, and memory (cognitive functions that are essential for everyday functioning) is the most prominent cognitive alteration in healthy aging (Paraskevoudi et al., 2018). Working memory in particular, a capacity-limited system for short-term storage and manipulation of information, is of fundamental importance for general cognitive functioning and is seen as a key function and processing resource for other cognitive abilities (Salthouse, 1990; Chai et al., 2018).

Cognitive training interventions, as a non-pharmacological intervention and prevention method, have gained increased scientific interest (Lustig et al., 2009). A recent meta-analysis by Chiu et al. (2017) on broad cognitive interventions in healthy older adults clearly indicated the potential of cognitive interventions to counteract cognitive decline. However, some issues, such as the degree of transfer to untrained tasks and long-term effects, remain a matter of debate. In this context, working memory has become a main target for cognitive training interventions. The role of working memory as a processing resource for other cognitive abilities (Salthouse, 1990; Chai et al., 2018) implies that working memory improvements after targeted working memory training (WMT) might naturally lead to positive transfer effects to other cognitive functions and even fluid intelligence (Au et al., 2015). Despite a general consensus that targeted WMT produces direct training effects (i.e., effects in trained working memory tasks over the course of training) and near-transfer effects (i.e., effects in untrained working memory tasks), far-transfer effects (i.e., effects in untrained domains) have not been convincingly shown for different populations, including healthy older adults (for recent meta-analyses see, e.g., Melby-Lervåg et al., 2016; Weicker et al., 2016; Soveri et al., 2017; Sala et al., 2019; Teixeira-Santos et al., 2019). Given these heterogeneous results on the effects of WMT, identifying the factors that modify WMT responsiveness, so-called prognostic or moderating factors (including both individual- and training-related characteristics), seems highly relevant.